Idea 1

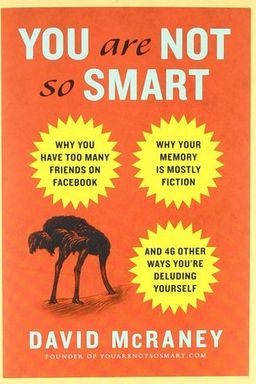

You Are Not So Smart: How Everyday Thinking Deceives You

Have you ever been certain you were right—only to realize later that you were completely wrong? In You Are Not So Smart, David McRaney upends the comforting notion that you are a rational, consistent, and objective creature. He argues that your mind is full of invisible biases, shortcuts, and flawed assumptions that shape everything you believe, remember, and do. You don’t make decisions based solely on logic; instead, you constantly rewrite reality so you can feel good about yourself.

The book draws from decades of cognitive science to show that the human brain is a master confabulator—a storyteller spinning narratives to maintain an illusion of control and coherence. McRaney blends sharp humor with insights from psychology, using real experiments to demonstrate just how easily your mind can trick itself. His central message is bold yet liberating: recognizing your delusions isn’t humiliating—it’s empowering, because only then can you start thinking more clearly.

The Mind: A Storytelling Machine

McRaney begins by explaining that your mind creates meaning from chaos through constant storytelling. You take dangerous shortcuts to keep your self-image intact, telling yourself comforting lies about why you chose what you chose or why you believe what you believe. In reality, much of your decision-making is unconscious. Phenomena like priming, in which simple cues influence your behavior without your awareness, reveal that even your smallest actions are often triggered by suggestions you never consciously register.

For instance, participants exposed to words associated with old age (“wrinkled,” “Florida,” “gray”) unconsciously walked more slowly afterward. This subtle manipulation shows that you are constantly interpreting and reacting to the world from a script your brain writes, not from logical reasoning. McRaney reminds you that you are always of “two minds,” one emotional and automatic, the other rational and deliberate, but it’s the emotional brain that usually wins.

Why Self-Deception Is Essential

One of McRaney’s most paradoxical insights is that some delusion is not only inevitable but necessary. Your mind needs certain illusions (about your competence, your control, your morality) to function without paralyzing anxiety. Without these built‑in distortions, you might freeze up or despair at your limitations. He calls this our “psychological immune system.” Yet this same mechanism blinds you to your mistakes, makes you overconfident, and traps you in toxic mental patterns like procrastination and confirmation bias.

For example, you convince yourself that your opinions are well-researched (confirmation bias), that your failures were caused by bad luck instead of your own errors (self-serving bias), and that other people notice your flaws far more than they actually do (the spotlight effect). Your self‑image depends on these inaccuracies, because questioning them feels threatening. As McRaney jokes: “Feeling good about yourself is mostly self‑delusion. But it’s useful self‑delusion.”

Patterns, Illusions, and Cognitive Shortcuts

At the heart of the book lies the idea that your brain hunts for patterns—even when none exist. This pattern-seeking nature once kept humans alive in dangerous environments, helping them recognize predators, poisons, or allies. But in the modern world it gives rise to apophenia (seeing connections where there are none), conspiracy theories, superstition, and pseudoscience. Because the brain craves coherence, it constructs stories that feel true even when they’re demonstrably false.

To keep up with the overwhelming number of decisions you face daily, your mind uses heuristics—mental shortcuts that allow speedy judgments but often lead to irrational conclusions. The availability heuristic, for instance, makes you overestimate how common dramatic events are because the media keeps them vivid in your mind. If you see one shark attack on the news, you assume the ocean teems with man‑eaters. Your statistics are emotional, not numerical.

Illusions of Memory and Control

McRaney also dismantles your faith in memory. Every act of remembering reconstructs a story rather than replaying an objective record. Experiments by Elizabeth Loftus show that a single misleading word like “smashed” instead of “hit” can make witnesses “remember” nonexistent broken glass. Your memories are flexible, constantly rewritten to fit new information or your current emotional state. This means that your autobiography is a movie “based on true events,” not a documentary.

Just as your memory deceives you, so does your sense of control. You believe you can predict outcomes and steer life through willpower, but random chance and complexity often rule. The more competent or powerful you feel, the greater your illusion of control, which helps explain why gamblers throw dice harder when they want a high number and why CEOs overestimate their influence on markets. As McRaney wryly notes, “The future is a billion rolls of the dice, and you think you can load them.”

From Illusion to Insight

Ultimately, You Are Not So Smart isn’t a book designed to shame you—it’s an invitation to humility. McRaney argues that true wisdom begins when you accept that your brain is a kludge, an ancient machine adapted for survival rather than truth. By learning how biases like groupthink, conformity, and the just‑world fallacy operate, you can counteract them with curiosity and skepticism. Awareness doesn’t abolish delusion, but it gives you distance from it. And that distance, McRaney suggests, might be the closest thing to smart we can ever get.