Idea 1

The Illusion of Knowing

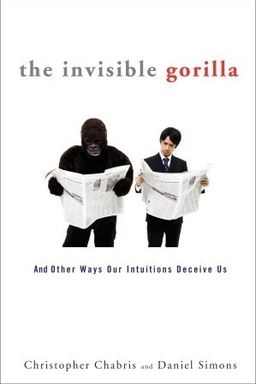

How much of what you think you know is actually true? In The Invisible Gorilla, Christopher Chabris and Daniel Simons reveal that your mind systematically deceives you. You believe that you see and remember more than you do, that you understand complex things you don’t, and that you can trust your own confidence as proof of correctness. These are not occasional lapses; they are built-in illusions that shape everyday choices, relationships, and decisions.

The authors argue that your intuitive self-image—of being observant, rational, and knowledgeable—is misleading. You are not irrational, but your mind applies shortcuts evolved for survival to environments far more complex than those where they originated. These shortcuts create powerful yet false feelings of certainty. Recognizing these illusions is the first step toward wiser judgment and better decision-making.

The Six Core Illusions

Chabris and Simons organize their argument around six “everyday illusions”: attention, memory, confidence, knowledge, cause, and potential. Each arises because the brain operates efficiently but selectively. You notice only a fraction of what you see; you record fragments rather than full memories; you rely on confidence and intuition as proxies for truth; you assume familiarity equals understanding; you infer causes from patterns; and you overestimate the ease of unlocking mental potential. Together these illusions produce a seamless—but often inaccurate—experience of reality.

For example, the famous “gorilla experiment” revealed inattentional blindness: when focused on counting basketball passes, about half of the participants missed a person in a full-body gorilla suit walking through the scene. Looking without attending left them effectively blind to it. The same mechanism helps explain why drivers miss motorcycles at intersections and why experienced pilots can overlook another aircraft on the runway.

Memory deceives in equally striking ways. Your recollections are not recorded movies but reconstructed stories that blend fact, inference, and bias. Experiments like the Deese–Roediger–McDermott test show that you easily “remember” words that fit a theme but never appeared. In real life, this makes eyewitness testimony fallible and emotional flashbulb memories unreliable. The vividness of memory is not evidence of its truth.

Why Confidence Misleads

You are wired to mistake confidence for competence. Across domains, from chess to medicine to jury rooms, people treat confident speech as proof of ability. Yet research by Kruger and Dunning shows that those who know the least often overestimate their own performance the most. Feedback rarely corrects this, because judging your own performance draws on the same skills as performing well, and that metacognitive skill grows only with expertise. Confident eyewitnesses, politicians, or executives can inspire trust while being wrong. Groups worsen the distortion: assertive speakers seize leadership even when their judgment is poor, and shared discussion inflates collective confidence without a matching gain in accuracy.
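One way to make the gap between confidence and accuracy concrete is to score your own predictions. The sketch below is only an illustration, not a method from the book: the function name `overconfidence_gap` and the sample data are hypothetical. It compares the average confidence you stated with the share of judgments that turned out to be correct.

```python
from statistics import mean


def overconfidence_gap(judgments):
    """Return average stated confidence minus actual accuracy.

    `judgments` is a list of (confidence, was_correct) pairs, where
    confidence is a probability between 0 and 1. A positive result
    means confidence ran ahead of accuracy.
    """
    if not judgments:
        raise ValueError("need at least one judgment")
    avg_confidence = mean(conf for conf, _ in judgments)
    accuracy = mean(1.0 if correct else 0.0 for _, correct in judgments)
    return avg_confidence - accuracy


# Hypothetical record: four predictions with stated confidence and outcome.
sample = [(0.9, True), (0.9, False), (0.8, False), (0.7, True)]
gap = overconfidence_gap(sample)
print(f"Overconfidence gap: {gap:.3f}")
```

A gap near zero suggests well-calibrated confidence. A large positive gap is the pattern the paragraph above describes: feeling sure more often than being right.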

The Illusion of Understanding and Technology’s Trap

The “illusion of knowledge” extends to tools, technology, and projects. You can ride a bicycle or use your smartphone yet be unable to explain how they work. The same illusion plagues experts forecasting gene counts, city budgets, or construction schedules. The antidote is the outside view—anchoring your predictions in the track record of past, similar efforts instead of imagining unique success. Technology deepens the illusion when it gives information without feedback. Head-up displays make pilots slower to notice hazards, GPS systems lure drivers into wrong turns, and data dashboards tempt investors to chase noise instead of signal. In judgment, feedback matters more than information volume.
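The outside view can be reduced to simple arithmetic. The following is a minimal sketch under stated assumptions: the function name `outside_view_estimate`, the use of a median overrun ratio, and the project history are all hypothetical choices for illustration, not something the authors prescribe. It scales your own "inside" estimate by how far similar past efforts overran their plans.

```python
from statistics import median


def outside_view_estimate(inside_estimate, past_projects):
    """Adjust an inside-view estimate using the record of similar efforts.

    `past_projects` is a list of (planned, actual) durations. The median
    ratio of actual to planned is used as the correction factor
    (an assumption of this sketch; other summaries could be used).
    """
    if not past_projects:
        raise ValueError("need at least one past project")
    ratios = [actual / planned for planned, actual in past_projects]
    return inside_estimate * median(ratios)


# Hypothetical history: (planned weeks, actual weeks) for three past projects.
history = [(10, 15), (20, 24), (8, 16)]
print(outside_view_estimate(12, history))  # 12 weeks planned, median overrun 1.5x -> 18.0
```

The point of the design is that the correction comes from what actually happened to comparable projects, not from how confident you feel about this one.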

Patterns, Causes, and Stories

Humans are pattern detectors. That capacity made survival possible but now produces causal illusions. You detect faces in clouds (pareidolia) and assume sequences imply connections. The Cincinnati measles outbreak and the global vaccine–autism scare demonstrate how storytelling reinforces false causal links. When autism symptoms appear after vaccination, temporal proximity feels causal, but large epidemiological studies show no relationship. Anecdotes—vivid and emotional—override statistics because your mind is built for narrative coherence, not probabilistic reasoning.

Similar mechanisms underlie many popular myths, such as the “Mozart effect.” A small lab finding, a brief gain on a spatial reasoning task that later work attributed to mood and arousal, became a worldwide claim that classical music boosts intelligence. Failed replications erased the miracle but arrived too late to stop the industry built around it. Your mind prefers stories that promise control, quick remedies, and clear causes. Scientific restraint (demanding replication, comparing alternative explanations, and seeking feedback) provides the only reliable defense.

The Path to Cognitive Humility

Ultimately, the book calls for humility about perception and knowledge. Attention is finite; memory is reconstructive; intuition needs calibration; understanding is partial; patterns are seductive; and effortless potential is a myth. Instead of despairing, you can treat awareness of these limits as a strength. Design environments that expose blind spots, seek objective feedback, and test causal claims systematically. Simple habits, such as checking assumptions, comparing with base rates, and collaborating with others who see differently, turn illusions into learning tools.

Core lesson

You trust your mind too much because it feels accurate from the inside. True wisdom comes from distrusting that feeling just enough to test, verify, and adjust it.

By exposing the hidden flaws behind everyday confidence, The Invisible Gorilla offers not cynicism but self-awareness—a realistic picture of human cognition that allows better attention, safer design, fairer judgments, and more grounded understanding of ourselves.