Idea 1

The Evolution of Human‑Machine Partnership

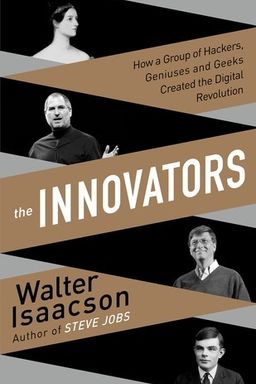

The history of computing is not simply the story of faster machines. It is the story of how people imagined, built, and then reimagined tools that amplify thought. Across two centuries, visionaries from Ada Lovelace to Alan Turing, Grace Hopper, Douglas Engelbart, Tim Berners‑Lee, and modern AI designers transformed the idea of computation from mechanical arithmetic into human‑machine symbiosis. This book traces that arc through invention, abstraction, the electronic revolution, programming, networks, and digital culture.

From Calculation to Imagination

Ada Lovelace planted the first conceptual seed. Writing about Charles Babbage’s proposed Analytical Engine in 1843, she recognized that a machine could manipulate symbols, not merely numbers. Her published algorithm for Bernoulli numbers anticipated loops, conditional statements, and even reusable code libraries. Her “poetical science” balanced rigor with imagination: she believed that how machines extend human creativity matters as much as what they compute. Alan Turing later formalized this leap. His universal machine showed that a single device could carry out any computable procedure, given a precise enough description, making programmability the essence of computing.

Theory Meets Engineering

In the 1930s, Claude Shannon showed how Boolean logic could become electrical circuits—bridging abstraction with hardware. Meanwhile, wartime needs pushed these theories into practice. Mechanical “calculators” evolved into vacuum‑tube machines that crunched trajectories and decrypted codes. When John von Neumann articulated the stored‑program architecture, software took center stage. Grace Hopper and the women of ENIAC invented frameworks—subroutines, debugging, compilers—that carved out the human side of hardware: how to express intention to a machine.

Electrons, Transistors, and Silicon Ecosystems

Bell Labs’ transistor breakthrough (1947) miniaturized and accelerated computation. William Shockley’s later managerial failure in Palo Alto paradoxically birthed Silicon Valley, as the “traitorous eight” left to form Fairchild Semiconductor and paved the way for the venture‑capital model. Then Robert Noyce and Jack Kilby, working independently, solved the “tyranny of numbers” with integrated circuits, allowing chips to scale. Gordon Moore’s 1965 observation that the number of components on a chip was doubling about every year (a pace he revised in 1975 to every two years) became a guiding prophecy for planning technological growth. Intel’s founders, Noyce and Moore, together with early hire Andy Grove, built a culture that united autonomy, technical excellence, and operational intensity: a living alloy of leadership.

Programming the World

With the microprocessor’s birth (Intel 4004, 1971), computation became portable and programmable. Ted Hoff’s insight, to replace many specialized chips with one general‑purpose processor, redefined the economics: software could now define hardware behavior. In parallel, the hacker culture at MIT and entrepreneurs at Atari had been discovering the joy of interactive computing. From Spacewar (1962) to Pong (1972), creative play made computers personal.

Networks, Collaboration, and Openness

J. C. R. Licklider’s dream of man‑computer symbiosis and an “intergalactic network” drove ARPA to fund time‑sharing, then the ARPANET. The first message—“Lo”—linked UCLA and SRI in 1969, launching distributed connection as a civic infrastructure. Tim Berners‑Lee later merged hypertext with the Internet, making the Web open and free (CERN’s public‑domain release). Mosaic’s graphical browser made the Web accessible, launching the publishing era of digital life. Simplicity shaped history: Andreessen’s visual focus shifted the Web toward read‑only mass communication rather than collaborative editing—until blogs, wikis, and Wikipedia reintroduced participatory authorship.

Economies, Communities, and the Cultural Web

Software became the true engine of value. Bill Gates and Paul Allen’s Altair BASIC formed Microsoft’s foundation for proprietary software; Stallman and Torvalds countered with GNU and Linux, emphasizing freedom and transparency. Meanwhile, modems and bulletin boards democratized access, and ordinary homes joined the digital commons. When AOL and CompuServe attracted millions, culture shock followed: 1993’s “Eternal September,” when AOL opened Usenet to its subscribers, symbolized the public’s irreversible flood into cyberspace. Berners‑Lee’s open protocols ensured that anyone could publish, and Google’s PageRank algorithm later organized the chaos by turning collective human linking into machine intelligence.

The Persistent Idea: Symbiosis

Across all eras, one insight repeats: technology grows not through replacement, but through partnership. Ada Lovelace’s caution—that machines do not originate ideas—remains prophetic. Modern AI systems from Deep Blue to Watson amplify rather than replicate human insight. Licklider’s and Engelbart’s visions of augmentation endure in collaborative computing, search engines, and neural networks. Ultimately, innovation is cultural, institutional, and poetic. The computer’s story is our story—how we encode imagination into machinery and, in doing so, remake ourselves.