Idea 1

AI’s Trajectory and the Two Power Tracks

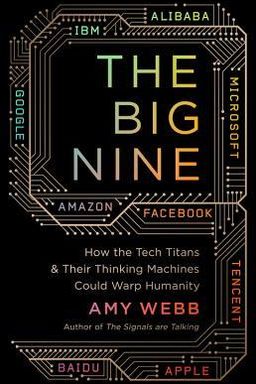

How can you shape the future of artificial intelligence when it’s already shaping you? In The Big Nine, Amy Webb argues that AI’s evolution is neither accidental nor universal. It is being directed by two distinct geopolitical and cultural power tracks: the U.S.‑centric, market‑driven G‑MAFIA (Google, Microsoft, Amazon, Facebook, IBM, Apple) and the Chinese, state‑aligned BAT (Baidu, Alibaba, Tencent). The two blocs operate under different incentive systems, one guided by shareholder returns and the other by centralized industrial policy, and those differences are rewriting economic, social, and ethical norms around the world.

Two models, two futures

In the United States, AI is treated as a commercial product. The G‑MAFIA are rewarded for fast iteration, not careful governance. Webb calls this a nowist mindset: short‑term thinking that favors investor confidence and user growth at the cost of long‑term civic health. You live this every time your feed updates, your ad preferences are optimized, or privacy concessions are quietly folded into new features. In contrast, China’s BAT operate within a long‑range, state‑coordinated vision. The government’s New Generation Artificial Intelligence Development Plan, which targets global AI leadership by 2030, fuses national security, economic planning, and social management. Citizens’ data, gleaned through systems like Tencent’s WeChat and Alibaba’s ET City Brain, fuel integrated surveillance and governance systems.

These tracks are not just national differences; they are competing philosophies of what it means to be human in a data‑driven world. The G‑MAFIA turn behavior into commercial prediction. BAT systems convert behavior into compliance metrics. Both demand your data, but for opposite reasons: profit versus control.

The stakes for citizens and governance

This bifurcation matters because it shapes the rules you live under whether or not you notice. If you live within U.S. systems, algorithms quietly nudge you for engagement—likes, clicks, purchases. Under China’s systems, AI optimizes for social harmony and policy compliance. Yet in the global economy you already straddle both worlds: your phone, cloud storage, supply chains, and travel data touch both ecosystems. These systems affect how loans are approved, who is hired, which stories trend, and how health decisions get automated.

Why Webb calls for foresight

Webb warns that short‑term market logic and authoritarian optimization each lead to dangerous lock‑ins. The West risks monopolistic complacency; China risks digital authoritarianism. Without intervention, both paths converge toward diminished human agency: one through addiction to convenience, the other through dependence on state infrastructure. To counter both risks, Webb proposes global coordination, calling on governments and companies to treat AI as a public good rather than just a product or weapon.

Preview of what follows

The chapters that follow expose why AI systems inherit the blind spots of their creators, why hardware and infrastructure matter as much as algorithms, and how fragile systems can generate cascading harms. Webb moves from history and culture to possible futures—showing that between utopia and catastrophe lies a range of plausible, human‑guided outcomes. The responsibility, she insists, lies with you and your institutions: to question whose values are encoded, demand transparency, and change the incentives behind the code.

Core claim

AI is not an autonomous runaway technology; it is the aggregate expression of human priorities and politics. Until we design governance and educational systems that reflect diverse, long‑term human values, AI will continue to mirror—and magnify—the inequality, bias, and short‑termism of those who build it.